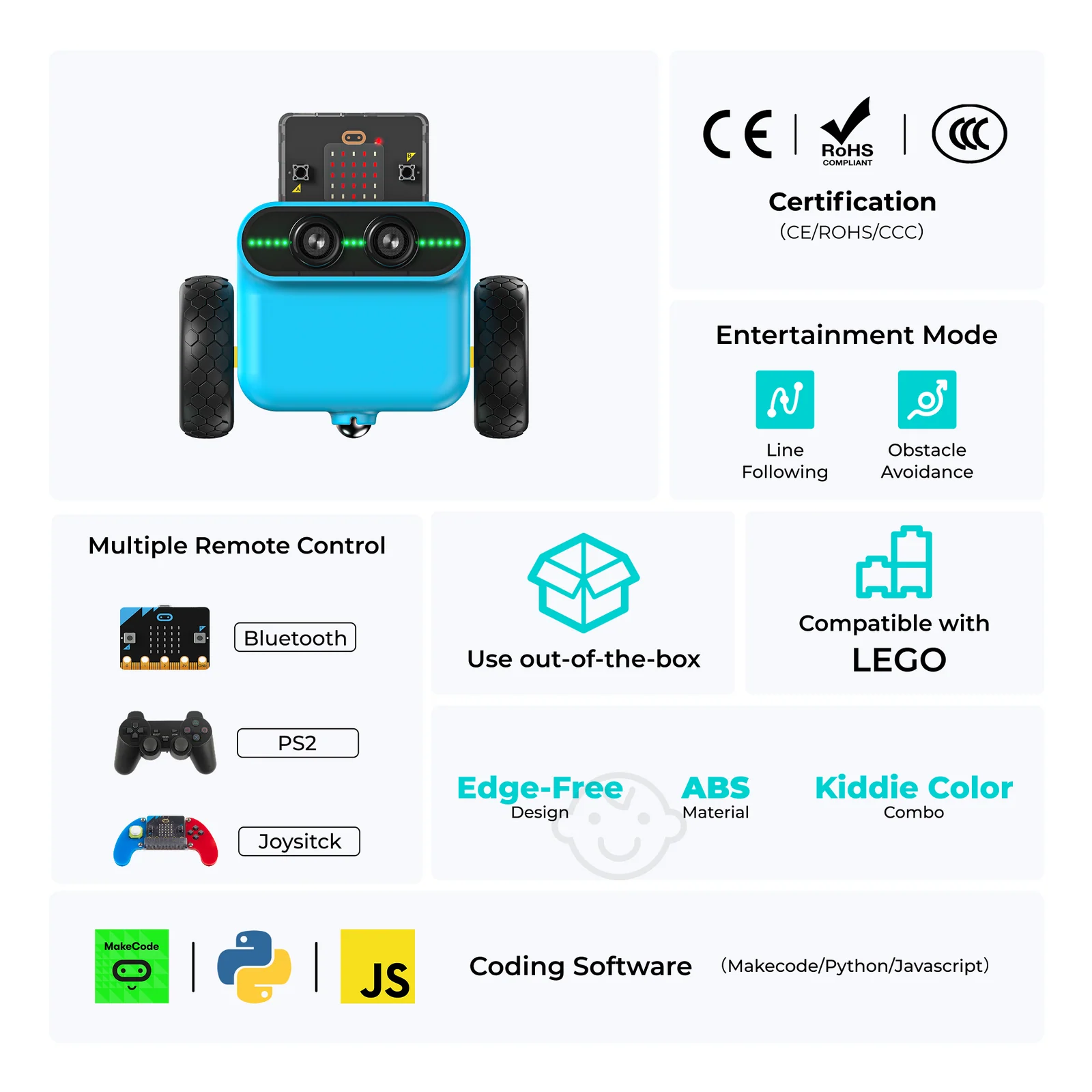

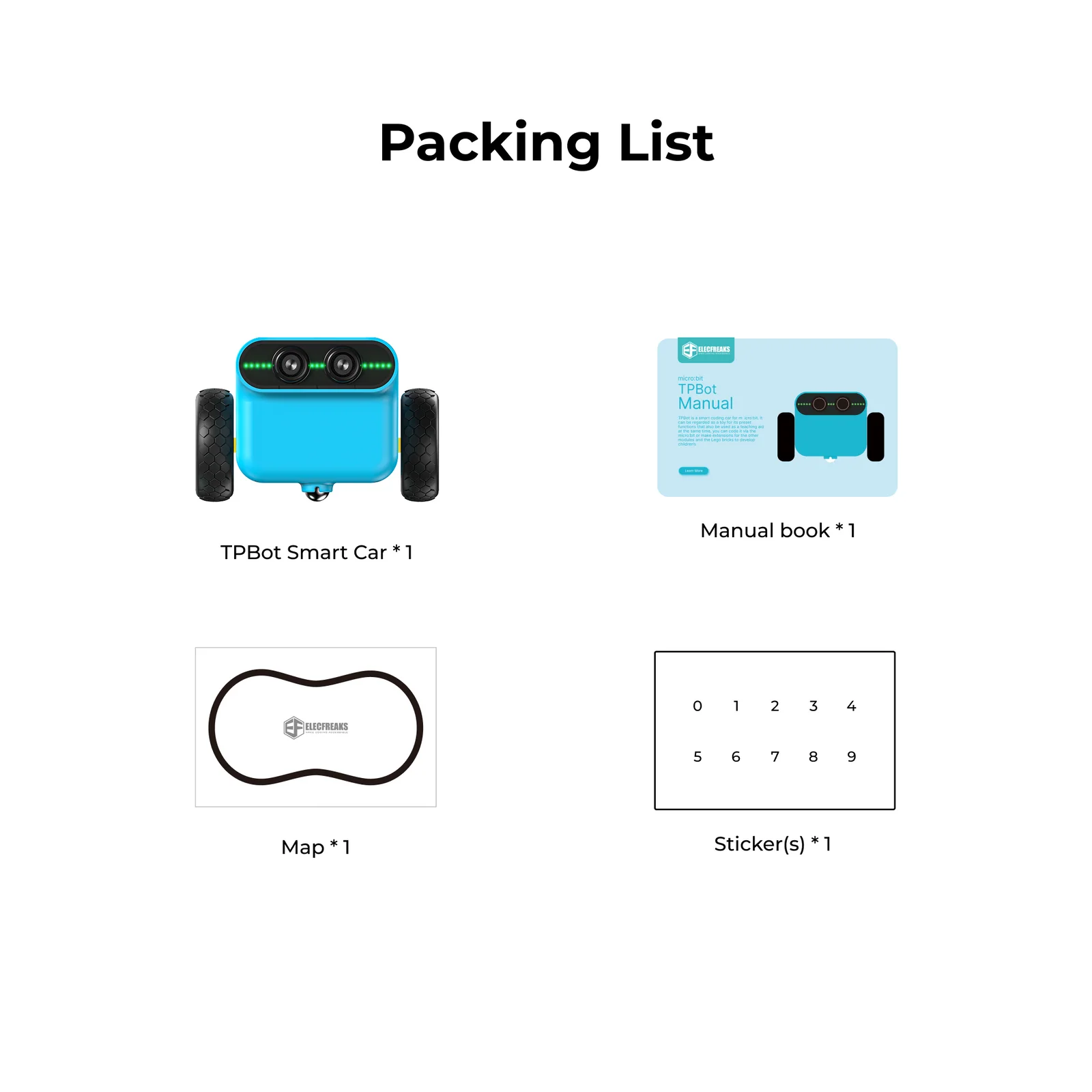

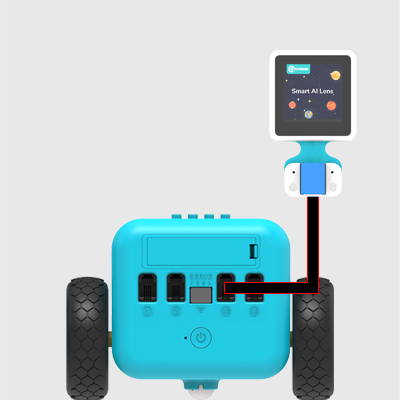

TPBot Car Kit

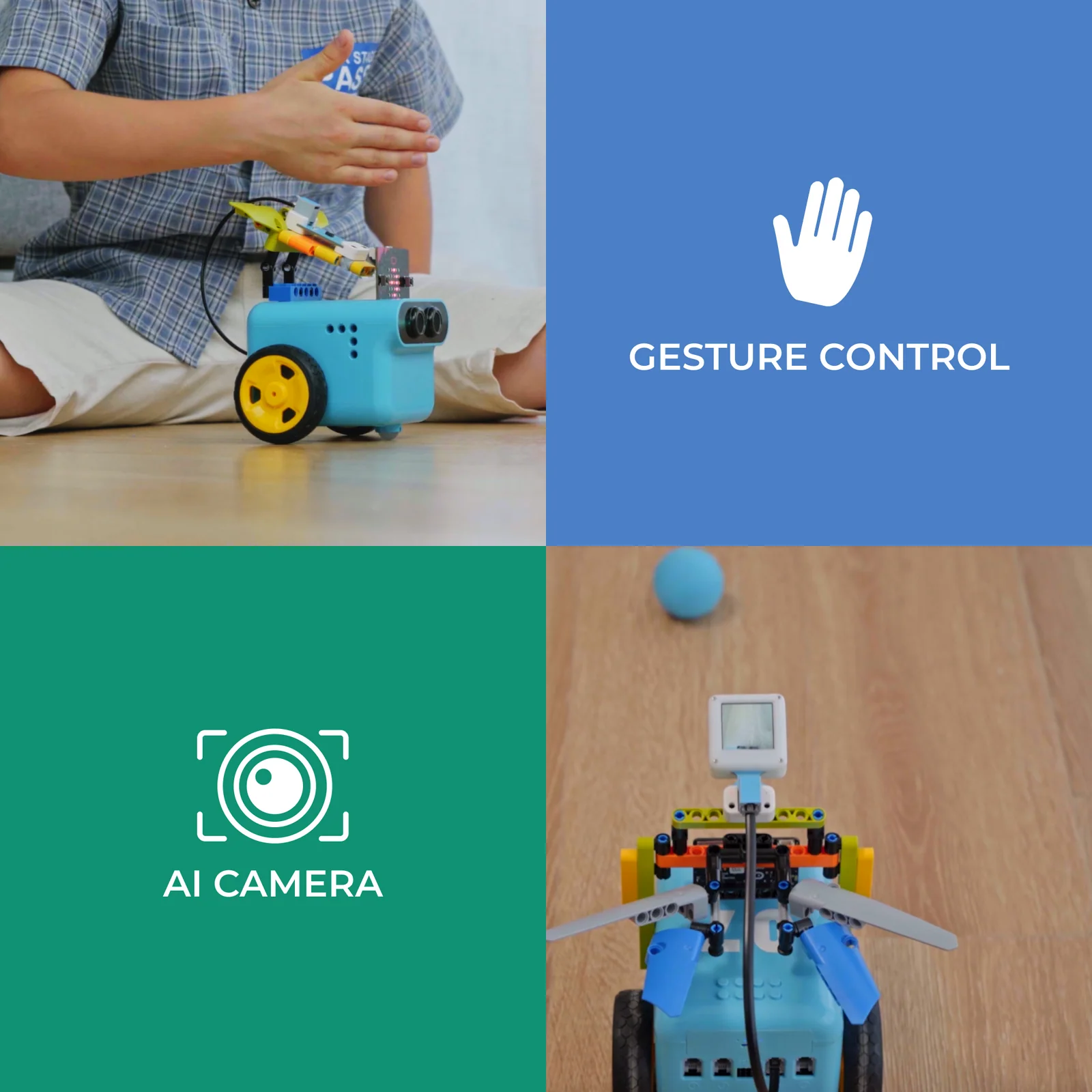

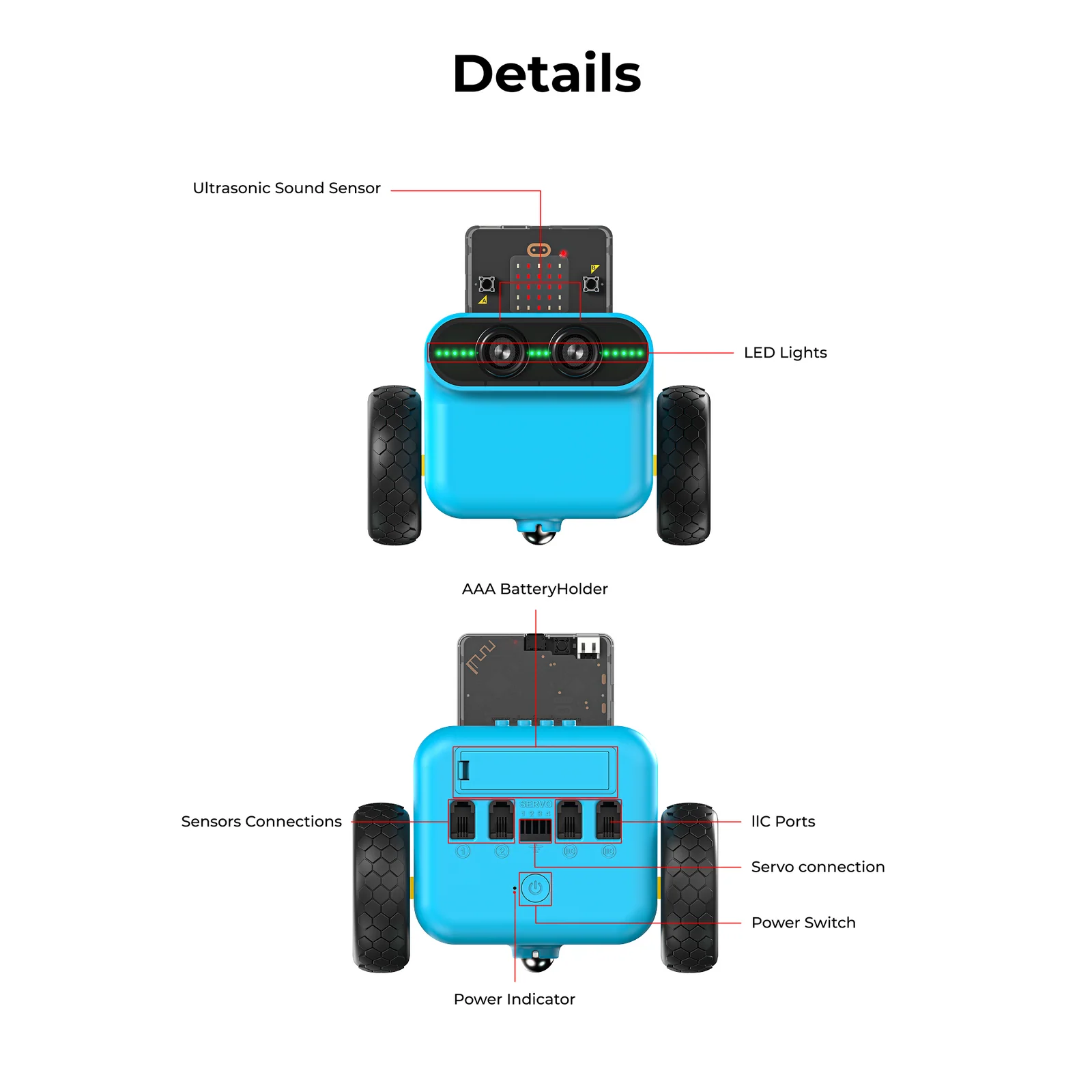

A smart coding car for micro:bit that works both as a standalone toy and as a powerful classroom teaching aid. It features preset line-tracking and obstacle-avoidance modes, RGB LED headlights, servo expansion ports, and full LEGO brick compatibility, so students can move from play to programming in minutes.